Introducing Orchestra

November will mark the 10-year anniversary of Amazon’s Mechanical Turk, which introduced the concept of a microtask to the world.

On the flipside, microtask labor dehumanizes the humans doing the work. It commoditizes the very features that separate humans from machines: Click on the correct photo, and make sure to do it fast. Describe the product in one sentence, not two. We’re training algorithms to replace you, so we’ve filtered out all of the nuance and ambiguity that might have made the work interesting. Rather than nurturing and celebrating subject matter expertise as people take on more substantial tasks, the microtask model has chopped work into the smallest bite-sized pieces, ones that anyone can complete with little training.

And so…

Hello, Orchestra!

There are many other uses we can imagine, ranging from recruiting and conference planning to managing various legal workflows at a law firm. We’re even thinking of ways Orchestra can be used in some of the most creative fields, like design, and in some of the most analytical, like data science.

What made it into v0.1.0


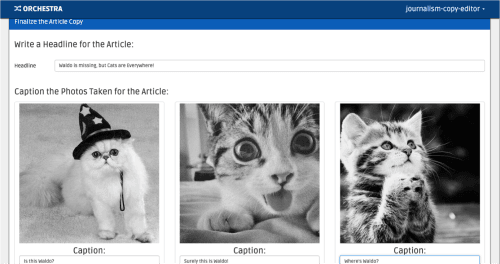

Workflows with humans and machines. Orchestra lets you define workflows in which experts contribute work and algorithms contribute computation. For example, a photographer can upload a batch of photos, an autocropping algorithm can resize them, and a copy editor can then write captions for them. In addition to the tutorial workflow in our “Getting started in 5 minutes” guide, we provide an illustrative example workflow implemented in Orchestra.
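To make the photo example concrete, here is a minimal Python sketch. Human and machine steps share one dependency graph, and the standard library's `graphlib` orders them. The `Step` class, its fields, the step slugs, and the `autocrop` stand-in are hypothetical illustrations. They are not Orchestra's actual workflow schema.

```python
from dataclasses import dataclass
from graphlib import TopologicalSorter  # Python 3.9+
from typing import Callable, Optional


@dataclass(frozen=True)
class Step:
    """A hypothetical workflow step; not Orchestra's real workflow schema."""
    slug: str
    worker_type: str  # "human" (assigned to an expert) or "machine"
    depends_on: tuple = ()
    # Only machine steps carry a function; human steps wait for an expert.
    function: Optional[Callable[[dict], dict]] = None


def execution_order(steps):
    """Return step slugs so that each step follows the steps it depends on."""
    graph = {step.slug: set(step.depends_on) for step in steps}
    return list(TopologicalSorter(graph).static_order())


def autocrop(data):
    """Stand-in for an autocropping algorithm: tag each photo with a target size."""
    return {"cropped": [f"{name}@800x600" for name in data["photos"]]}


photo_workflow = [
    Step("upload", "human"),                             # photographer
    Step("autocrop", "machine", ("upload",), autocrop),  # algorithm
    Step("caption", "human", ("autocrop",)),             # copy editor
]

print(execution_order(photo_workflow))
# ['upload', 'autocrop', 'caption']
```

In a real system, the human steps would pause until an expert submits work. This sketch shows only how the two kinds of steps can be ordered together.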


Review hierarchies. Knowledge work necessitates feedback. From code reviews to design critiques, professionals build review into their process. Orchestra allows a workflow designer to specify that particular workflow steps should be serially reviewed by any number of experts, so that everyone from apprentices to skilled reviewers can learn from someone else.
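A serial review chain can be pictured as a task moving up and down a ladder of reviewers. The sketch below simulates that movement under two assumptions of ours, not statements about Orchestra's implementation:

- Each reviewer either accepts or rejects the work.
- A rejection sends the task back exactly one level.

`Decision` and `review_path` are hypothetical names.

```python
from enum import Enum


class Decision(Enum):
    ACCEPT = "accept"
    REJECT = "reject"


def review_path(review_levels, decisions):
    """Return the sequence of levels that hold a task in a serial review chain.

    Level 0 is the expert who does the work; levels 1..review_levels are
    reviewers in order. Assumption: a rejection sends the task back one
    level, and the task is complete when the top reviewer accepts.
    Raises StopIteration if `decisions` runs out before that happens.
    """
    holder = 0
    path = [holder]
    pending = iter(decisions)
    while review_levels > 0:
        if holder == 0:
            holder = 1  # the worker submits to the first reviewer
        else:
            decision = next(pending)
            if decision is Decision.ACCEPT and holder == review_levels:
                break  # the top reviewer accepted: the task is done
            holder += 1 if decision is Decision.ACCEPT else -1
        path.append(holder)
    return path


# Two reviewers; the first sends the work back once before approving it.
print(review_path(2, [Decision.REJECT, Decision.ACCEPT, Decision.ACCEPT]))
# [0, 1, 0, 1, 2]
```

Adding a level to `review_levels` adds another, more senior reviewer above everyone else. This mirrors the idea that apprentices learn from reviewers who have more experience.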


Certifications. Photographers and reporters are often not the same person. Experts in Orchestra receive certification to do certain types of tasks, and each step in a workflow can specify which certifications are required to complete it.
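Matching experts to workflow steps by certification can be reduced to a subset check. In this hypothetical sketch, each expert holds a set of certification names and each step lists the ones it requires. The `Expert` class, `eligible_experts`, and the certification strings are illustrative. They are not Orchestra's data model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Expert:
    """A hypothetical expert record: a username plus certification names."""
    username: str
    certifications: frozenset


def eligible_experts(experts, required):
    """Usernames of experts holding every certification a step requires."""
    required = set(required)
    return [e.username for e in experts if required <= e.certifications]


experts = [
    Expert("ana", frozenset({"photography"})),
    Expert("ben", frozenset({"reporting", "copy_editing"})),
    Expert("cy", frozenset({"photography", "copy_editing"})),
]

# Illustrative per-step requirements for the photo workflow.
step_requirements = {
    "upload": {"photography"},
    "caption": {"copy_editing"},
}

for step, required in step_requirements.items():
    print(step, eligible_experts(experts, required))
# upload ['ana', 'cy']
# caption ['ben', 'cy']
```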


Slack/Google Drive integration. Teams in Orchestra collaborate using a number of tools. Orchestra’s Slack integration invites team members to a private Slack group as they join a project. Orchestra can also automatically create Google Drive folders so teammates have a place to share their work.


Project API. Orchestra exposes an HTTP API to create and monitor projects as experts and algorithms contribute to them. This allows other services to kick off projects, report on their progress, and pull structured data out of them after they finish.
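This sketch illustrates how another service might kick off a project over HTTP. It uses Python's standard library to build, but not send, a JSON `POST` request. The endpoint path and payload field names are placeholders made up for this example, not Orchestra's documented API. Authentication is omitted.

```python
import json
import urllib.request

# Placeholder path: not taken from Orchestra's documentation.
CREATE_PROJECT_PATH = "/api/projects/create/"


def build_create_project_request(base_url, workflow_slug, description, project_data):
    """Build (without sending) a JSON POST that asks a server to start a project.

    All field names are hypothetical; authentication is omitted.
    """
    payload = {
        "workflow_slug": workflow_slug,
        "description": description,
        "project_data": project_data,  # structured input for the first step
    }
    return urllib.request.Request(
        base_url.rstrip("/") + CREATE_PROJECT_PATH,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


request = build_create_project_request(
    "https://orchestra.example.com/",
    workflow_slug="photo_captions",
    description="Caption this week's photo essay",
    project_data={"photos": ["a.jpg", "b.jpg"]},
)
print(request.get_method(), request.full_url)
# POST https://orchestra.example.com/api/projects/create/
```

Passing the request to `urllib.request.urlopen` would send it. A monitoring client could follow the same pattern with `GET` requests against whatever status endpoint a deployment actually exposes.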

The limits of technology

Orchestra is technology, but digital labor is a sociotechnical concern. We built Orchestra because we believed the infrastructure to empower distributed teams was lacking, but software will only solve some of these problems. Some of the hardest problems we face daily are not technical at all, and we are both humbled and excited to participate in the discussion around the social parts of the infrastructure. Recruiting, vetting, and onboarding experts is one example: it is as hard to scale and as full of domain-specific nuance as it has ever been.

You should realize that by using this software, you’re going to run head-first into topics like labor models, organizational psychology, and operations research. Tread lightly, and remember that Orchestra, and technology more broadly, is not a silver bullet to address all of these considerations.

Getting involved

We can’t wait to see what you do with Orchestra. If we can help with anything, please reach out. Here are a few quick links to get you started:

Read next

See all

Introducing B12 3.0

Turn a short prompt into a complete website, store, or web app you can shape however you imagine.

Read now

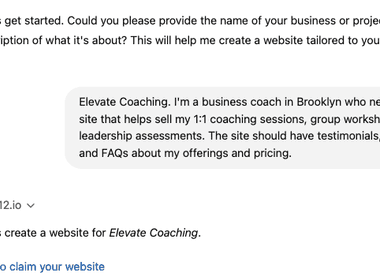

B12’s AI Agent: Take the work out of your website

Improve your website just by typing what you want

Read now

Trusted by 1M+ users: Our most personalized Website Generator yet

Build custom websites faster, add new pages in seconds, and manage multiple sites effortlessly

Read now